The paper describing the PAWDOC digital preservation work was submitted to the Digital Preservation Coalition (DPC) on 31st May, and the organisation responded saying it was interested in the paper but was currently unable to give a timescale for dealing with it because of a busy work schedule. I guess it might be several months before I hear whether the DPC wants to publish a version of the paper.

Category Archives: Preservation Planning

Paper written – Maint Plan test to do

The follow-up paper describing my recently completed preservation project is now ready for submission to the Digital Preservation Coalition (DPC). I’m hoping that, since they published my paper describing how I derived the Preservation Planning Templates in the first place, they might be interested in a paper describing how the templates have been used in practice. We’ll see. In any case, it’s good to have been able to create a summarised account of what happened while it’s fresh in my mind.

Writing the first draft of the paper only took about a week. However, that piece of work made me realise that the details of what got done when appear in five main documents – the paper I was writing, the Scoping document, the Plan DESCRIPTION, the Plan CHART, and section 2 of the Preservation Maintenance Plan (Previous preservation actions taken) – and that the base data for all these documents was being derived from the three major control sheets: the DROID analysis spreadsheet, the Files-that-won’t-open spreadsheet, and the Physical Disks spreadsheet. Although the facts were roughly consistent across the documents, there were several anomalies that would be apparent to readers, and the sheer number of files and types of conversion that had been performed made it difficult to check them and make revisions. I decided that the only way to achieve true consistency and traceability across all the documents would be to specify columns in the control spreadsheets for all the categories I wanted to describe, and to have the spreadsheets add up the counts automatically. This is what I spent the following two weeks doing – and a very slow and tortuous exercise it was. That is why the paper mentions several times the need to set up control sheets correctly in the first place, to meet downstream needs for control and for statistical information about what’s been done….
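The “let the control sheet do the counting” idea can also be expressed outside a spreadsheet. The Python below is a minimal sketch, not the actual PAWDOC spreadsheets: it assumes a control sheet exported to CSV with a hypothetical `Action` column holding one category per file, and tallies the categories so that every downstream document can quote the same figures. Blank cells are counted as `Unassigned` so that gaps show up rather than silently vanishing.

```python
import csv
import io
from collections import Counter

def tally_actions(csv_text: str, column: str = "Action") -> Counter:
    """Count how many rows of a control sheet fall into each category.

    ASSUMPTION: `column` is a hypothetical column name; blank or missing
    cells are counted as 'Unassigned' so that gaps appear in the totals.
    """
    reader = csv.DictReader(io.StringIO(csv_text))
    return Counter((row.get(column) or "").strip() or "Unassigned" for row in reader)

# Hypothetical extract from a control sheet.
sheet = """File,Action
a.doc,Convert to PDF
b.doc,Convert to DOCX
c.ppt,Convert to PDF
d.scm,
"""
counts = tally_actions(sheet)
for category, n in sorted(counts.items()):
    print(f"{category}: {n}")
```

Because every total comes from the same rows, a figure quoted in the paper, the Plan and the Maintenance Plan can always be traced back to the sheet.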

I was given a lot of very useful feedback on the drafts of the paper by Ross Spencer, including suggestions to include a summary timeline for the project at the beginning of the paper, to provide more details about the DROID tool, and to add some further references. Ross also advised making it clear that this is a personal collection, in which the preservation decisions made were ones the owners were comfortable with, and that other people might have made different decisions depending on who they expected the future users of the Collection to be. This prompted me to include an extra paragraph in the Conclusions section to the effect that no attempt has been made to convert some files (such as old versions of the Indexing software, or a Visio stencil file) because they have no content and their mere presence in the collection tells its own story. However, it’s got me thinking that there is a wider point here about what collections are for, and just how much detail of the digital form needs to be preserved. I’ll probably explore this issue further in the Personal Document Management topic in this Blog.

Writing the paper also prompted me to realise that, unfortunately, my Digital Preservation Journey can’t be completed until I’ve tested out the application of a Preservation Maintenance Plan. It’s one thing to fill in a Maintenance Plan (which was relatively quick and easy), but quite another to have it initiate and direct a full blown Preservation project. Only by using it in practice will it be known if it is an effective and useful tool; and, no doubt, its use will lead to some refinements being made to its contents. I shall explore whether I could use the Maintenance Plans I produced for photos and for mementos which were created in the course of the trials conducted when putting together the first versions of the Preservation Planning Templates. If they won’t provide an adequate test, I’ll have to wait until the date specified in the PAWDOC Preservation Maintenance Plan for the next Maintenance exercise – September 2021.

PawdocDP Preservation Project Put to Bed

Last Thursday (3 May) I completed the preservation project on my document collection – quite a relief to know that it is now in reasonably good shape for a few more years. To finish off this work I intend to write a follow-up paper recounting how the processes and templates I developed in the earlier stages of this exercise fared when applied to a substantial body of files. Looking back, I see that I started this Preservation Planning topic nearly four years ago, so it’s been a long haul and very labour intensive – I’m looking forward to being able to move it to the Journeys Completed section of this blog so that I can concentrate again on more creative and exciting forays!

Disk, Reordering, and Maintenance Plan Insights

Although my last post reported that I’d got through the long slog of the conversion aspects of this preservation project, in fact there was still more slog of other sorts to go. A lot more slog, in fact: there was the transfer of the contents of 126 CD/DVD disks to the laptop; and there was the reordering of pages in 881 files. In the 1990s, when I didn’t have a double-sided scanner, I scanned all the front sides first and then turned over the stack of pages to scan the reverse sides, which left the pages out of order. This exercise involved yet more conversion (from multi-page TIF file to PDF) before the reordering could be done.

This latter task took a huge amount of time and effort and was yet another reminder of how easy it is to specify tasks in a preservation project without really appreciating how much hard graft they will entail. Having said that, it’s worth noting that my PDF application – eCopy PDF Pro – had two functions which made this task a whole lot easier. First, the option to have eCopy convert a file to PDF is available in the menu brought up by right-clicking on any file; eCopy then suggests a title for the new PDF (based on the title of the original file) in the Save As dialogue box, and automatically displays the newly created file – all of which is relatively quick and easy. Second, eCopy can display thumbnails of all the pages in a document on the screen, and each page can be dragged and dropped to a new position. I soon worked out that the front-sides-then-reverse-sides scan produces a standard order in which the last page in the file is actually page 2 of the document; and that if you drag that page to be the second page in the document, the new last page will actually be page 4 of the document and can be dragged to become the fourth page. In effect, reordering simply means progressively dragging the last page into position 2, then position 4, then position 6, and so on until the end of the file is reached. Both these functions (being able to right-click on a file to get it converted, and being able to drag and drop pages around a screenful of thumbnails) are well worth looking for in a PDF application.
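The drag-and-drop rule above is mechanical enough to write down. The Python below is a minimal sketch of that rule applied to a list of page labels; it is not an eCopy feature. It assumes every sheet was scanned on both sides (an odd final page with no scanned back also works). The resulting order could in principle be handed to any PDF library that copies pages by index, but no particular library is assumed here.

```python
def reorder_duplex_scan(scanned: list) -> list:
    """Restore reading order for a 'fronts first, then flipped stack' scan.

    For a six-page document the scan order is [1, 3, 5, 6, 4, 2]: every
    front side, then the back sides in reverse because the stack was
    turned over. The loop mirrors the manual drag-and-drop: repeatedly
    take the last page and move it into position 2, then 4, then 6, ...
    """
    pages = list(scanned)  # work on a copy, leave the input untouched
    # 0-based indices 1, 3, 5, ... correspond to page positions 2, 4, 6, ...
    for pos in range(1, len(pages), 2):
        pages.insert(pos, pages.pop())
    return pages

print(reorder_duplex_scan([1, 3, 5, 6, 4, 2]))
```

For the six-page example the function returns the pages 1 to 6 in order; for an eight-page scan it performs the same four drags a person would do by hand.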

Regarding the disks, I was expecting to have trouble with some of the older ones since, during the scoping work, I had encountered a few which the laptop failed to recognise. I tried cleaning such disks with a cloth, without much success. However, what did seem to work was to select ‘Computer’ on the left side of the Windows Explorer window, which displays the laptop’s own drive on the right side of the window together with any external disks that are present. For some reason, disks which kept on whirring without seeming to be recognised just appeared on the right side of the window. I don’t profess to understand why this was happening – but I was just glad that, in the end, there was only one disk whose contents I couldn’t get the machine to display and copy.

I’m now in the much more relaxed final stages of the project, defining backup arrangements and creating the Maintenance Plan and User Guide documents. The construction of the Maintenance Plan has thrown up a couple of interesting points. First, since it requires a summary of what preservation actions have been completed and what preservation issues are to be addressed next time, it would have made life easier to construct the preservation working documents so that the information for the Preservation Maintenance Plan is effectively pre-specified – an obvious point really, but easy to overlook – and I did overlook it…. The second point is a more serious issue. The Maintenance Plan is designed to define a schedule of work to be undertaken every few years; it’s certainly not something I want to be doing very often – I’ve got other things I want to do with my time. However, some of the problem files I have listed in the ‘Possible future preservation issues’ section of the Maintenance Plan could really do with being addressed straight away – or at least sooner than 2021, when I have specified that the next Maintenance exercise should be carried out. I guess this is a dilemma which has to be addressed on a case-by-case basis. In THIS case, I’ve decided to leave the points as they are in the Maintenance Plan so that they don’t get forgotten, but possibly to take a look at a few of them in the shorter term if I feel motivated enough.

The Conversion Slog

I’m glad to say I’ve nearly finished the long slog through the file conversion aspects of this digital preservation project. After dealing with about 900 files I just have another 50 or so Powerpoints and a few Visios to get through. It’s been a salutary reminder of how easily large quantities of digital material could be lost simply because the sheer volume of files makes for a very daunting task to retrieve them.

Below are a few of the things I’ve learnt as I’ve been ploughing through the files.

Email .eml files: These are mail messages which opened fine in Windows Live Mail when I did the scoping work for this project. Unfortunately, since then I’ve had a system crash and Live Mail was not loaded onto my rebuilt machine; and Microsoft removed all Live Mail support and downloads at the end of 2017. On searching for a solution on the net, I found several suggestions to change the extension to .mht to get the message to open in a browser. This works well, but unfortunately the message header (From, To, Subject, Date) is not reproduced. I ended up downloading the Mozilla Thunderbird email application, opening each email in turn in it, taking screenshots of each screenful of message and copying them into PowerPoint, saving each one as a JPG, and then inserting the JPGs for all the emails in a particular category into a PDF document. A bit tortuous, and maybe there are better ways of doing it – but at least I ended up with the PDFs I was aiming for.

Word for Mac 3.0 files: These files did open in MS Word 2007 – but only as continuous streams of text without any formatting. After some experimentation, I discovered that doing a carriage return towards the end of the file magically re-instated most of the formatting – though some spurious text was left at the end of the file. I saved these as DOCX files.

Word for Mac 4.0 & 5.0 and Word for Windows 1.0 & 2.0: These documents all opened OK in Word 2007. However, I found that in longer documents structured as reports with a contents list, the pagination had drifted slightly out of sync, so that headings, paragraphs and bullets were left orphaned on different pages. I converted such files to DOCX format in order to have the option to reinstate the correct format in the future. Files without pagination problems, or which I had been able to fix without too much effort, were all converted to PDF.

PDF-A-1b: I had previously elected to store my PDF files in the PDF-A-1b format (designed to facilitate the long-term storage of documents). However, on using the conformance checker in my PDF application (eCopy PDF Pro) I discovered that they had several non-conformances; and, furthermore, a first run of eCopy PDF Pro’s ‘FIX’ facility does not resolve all of them. I decided that trying to make each new PDF I created conform to PDF-A-1b would take up too much time and would jeopardise the project as a whole. So, I included the following statement in the Preservation Maintenance Plan that will be produced at the end of the project: “PDF files created in the previous digital preservation exercise were not conformant to the PDF-A-1b standard, and the eCopy PDF Pro ‘FIX’ facility was unable to rectify all of the non-conformances. Consideration needs to be given as to whether it is necessary to undertake work to ensure that all PDF files in the collection comply fully with the PDF-A-1b standard.”

PowerPoint for Mac 4.0, Presentation 4.0, and PowerPoint 97-2003: All of these failed to open with PowerPoint 2007, so I used Zamzar to convert them. Interestingly, Zamzar wouldn’t convert to PPTX – only to PowerPoint 1997-2003, which I was subsequently able to open with PowerPoint 2007. So far, it has converted over 100 PowerPoints and failed with only four (two Mac 4.0 and two Presentation 4.0). The conversions have mostly been perfect, with the small exception that in some of the files some of the slides include a spurious ‘Click to insert title’ text box. I can’t be sure that these were inserted during the conversion process, but I think it unlikely that I would have left so many of them in place when preparing the slides. Zamzar’s overall PowerPoint conversion capability is very good, but I have experienced a couple of irritating characteristics. First, on several occasions it has sent me an email saying the conversion has been successful but then failed to provide the converted file, implying that it wasn’t able to convert the file. Second, the download screen allows five or more files to be specified for conversion, but if several files are included it only converts alternate files – the other files are reported to have been converted but no converted file is provided. This problem goes away if each file is specified on its own in its own download screen. The other small constraint is that the free service will only convert a maximum of 50 files in any 24-hour period – but that seems a fair limit for what is a really useful service (at the time of writing, the fee for the cheapest level of service was $9 a month).

UPDATED and ORIGINAL: I am including UPDATED in the file title of the latest version of a file, and ORIGINAL in earlier versions of the same file, because all files relating to a specific Reference No are stored in the same Windows Explorer folder and users need to be able to pick out the correct preserved file to open. There will be only one UPDATED file – all earlier versions will have ORIGINAL in the file title. Another way of dealing with this issue of multiple file versions would be to move all ORIGINAL versions to separate folders. However, this would make the earlier versions invisible and harder to get at, which may not be desirable. I believe this needs further thought – and input on requirements from future users of the collection – before the best approach can be specified.
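Because the convention depends on there being exactly one UPDATED file per Ref No folder, it lends itself to a mechanical check. The function below is a hypothetical sketch of such a check (the function name and example file titles are invented for illustration). It assumes the markers appear in upper case in the file title, as described above, and treats a folder with neither marker as one whose files needed no conversion.

```python
from typing import List, Optional

def pick_updated(filenames: List[str]) -> Optional[str]:
    """Return the one UPDATED file in a Ref No folder, or None if there is none.

    Raises ValueError when the naming rule is broken. Matching is
    case-sensitive so that an ordinary word such as 'updated' in a
    description is not mistaken for the marker.
    """
    updated = [f for f in filenames if "UPDATED" in f]
    originals = [f for f in filenames if "ORIGINAL" in f]
    both = set(updated) & set(originals)
    if both:
        raise ValueError(f"file(s) carry both markers: {sorted(both)}")
    if len(updated) > 1:
        raise ValueError(f"more than one UPDATED file: {updated}")
    if originals and not updated:
        raise ValueError(f"ORIGINAL file(s) with no UPDATED version: {originals}")
    return updated[0] if updated else None

# Hypothetical folder contents.
folder = ["REF-0001 Strategy note ORIGINAL.doc", "REF-0001 Strategy note UPDATED.docx"]
print(pick_updated(folder))
```

Run over every folder, a check like this would flag exactly the cases where a user could be unsure which file to open.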

DOCX, PPTX and XLSX: When converting MS Office documents, unless I was converting to PDF, I elected to convert to the DOCX, PPTX and XLSX formats for two reasons: they are Microsoft’s future-facing formats, and – for the time being – they provide another way of distinguishing between files that have been UPDATED and those that haven’t.

Many of these experiences came as a surprise despite the amount of scoping work that was undertaken; and that is probably inevitable. To be able to nail down every aspect of each activity would take an inordinate amount of time. There will always be a trade off between time spent planning and the amount of certainty that can be built into a plan; and it will always be necessary to be pragmatic and flexible when executing a plan.

Retrospective Preservation Observations

Yesterday I reached a major milestone. I completed the conversion of the storage of my document collection from a Document Management System (DMS) to files in Windows Folders. It feels a huge release not to have the stress of maintaining two complicated systems – a DMS and the underlying SQL database – in order to access the documents.

From a preservation perspective, a stark conclusion has to be drawn from this particular experience. The collection started using a DMS some 22 years ago, and over that time I have been through 5 changes of hardware, one laptop theft and a major system crash. In order to keep the DMS and SQL Db going I have had to configure and maintain complex systems I had no in-depth knowledge of; engage with support staff by phone, email, screen sharing and in person for many, many hours to overcome problems; and back up and nurture large amounts of data regularly and reliably. If I had done nothing to the DMS and SQL Db over those years, I would long ago have ceased to be able to access the files they contained. In contrast, if the files had been in Windows folders I would still be able to access them. So, from a digital preservation perspective, there can be no doubt that having the files in Windows folders will be a far more durable solution.

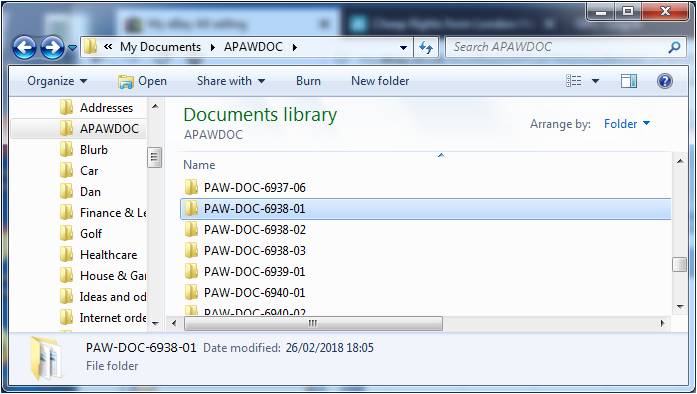

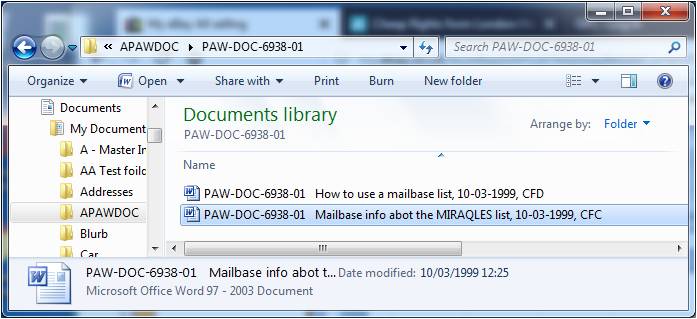

When considering moving away from a DMS I was concerned it might be difficult to search for and find particular documents. I needn’t have worried. Over the last week or so I’ve done a huge amount of checking to ensure the export from the DMS into Windows Folders had been error free. This entailed constant searching of the 16,000 Windows Folders and I’ve found it surprisingly easy and quick to find what I need. The collection has an Index with each index entry having a Reference Number. There is a Folder for each Ref No within which there can be one or more separate files, as illustrated below.

Initially, I tried using the Windows Explorer search function to look for the Ref Nos, but I soon realised it was just as easy – and probably quicker – to scroll through the Folders to spot the Ref No I was looking for. The search function on the other hand will come in useful when searching for particular text strings within non-image documents such as Word and PDF – a facility built into Windows as standard.

I performed three main types of check to ensure the integrity of the converted collection: a check of the documents that the utility said it was unable to export; a check of the DMS files that remained after the export had finished (the utility deleted the DMS version of a file after it had exported it); and, finally, a check of all the Folder Ref Nos against the Ref Nos in the Index. These checks are described in more detail below.

Unable to export: The utility was unable to export only 13 of the 27,000 documents and most of these were due to missing files or missing pages of multi-page documents.

Remaining files: About 1400 files remained after the export had finished. About 1150 of these were found to be duplicates, with contents that were present in files that had been successfully exported. The duplications probably occurred in a variety of ways over the 22-year life of the DMS, including human error in backing up and in moving files from off-line media to on-line media as laptops started to acquire more storage. 70 of the files were used to recreate missing files or to augment or replace files that had been exported. Most of the rest were pages of blank or poor scans which I assume I had discovered and replaced at the point of scanning but which somehow had been retained in the system. I was unable to identify only 7 of the files.
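Much of this sorting came down to recognising leftover files whose contents already existed in the export. Where the leftovers are byte-for-byte copies, a checksum comparison could do a first pass automatically. The Python below is a minimal sketch of that idea using SHA-256 from the standard library; it is not the method described above, and it will miss duplicates that differ in any way (a rescan, a different file format, changed metadata), which still need the kind of manual inspection described here. The file names in the demonstration are hypothetical.

```python
import hashlib
import tempfile
from pathlib import Path
from typing import Dict, List

def sha256_of(path: Path) -> str:
    """Hash a file in 1 MiB chunks so large scans need not fit in memory."""
    h = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(1 << 20), b""):
            h.update(chunk)
    return h.hexdigest()

def find_exact_duplicates(remaining: List[Path], exported: List[Path]) -> Dict[Path, Path]:
    """Map each remaining file to an exported file with identical bytes."""
    by_digest = {sha256_of(p): p for p in exported}
    matches = {}
    for p in remaining:
        digest = sha256_of(p)
        if digest in by_digest:
            matches[p] = by_digest[digest]
    return matches

# Demonstration with throwaway files.
with tempfile.TemporaryDirectory() as tmp:
    base = Path(tmp)
    (base / "exported.pdf").write_bytes(b"page one")
    (base / "left_over.tif").write_bytes(b"page one")
    (base / "blank_scan.tif").write_bytes(b"")
    found = find_exact_duplicates([base / "left_over.tif", base / "blank_scan.tif"],
                                  [base / "exported.pdf"])
    dupes = {p.name: q.name for p, q in found.items()}
print(dupes)
```

Anything not matched here would still go through manual review, so the hash step can only shrink the pile, never decide the hard cases.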

Cross-check of Ref Nos in Index and Folders: This cross-check revealed the following problems with the exported material from the DMS:

- 9 instances in which a DMS entry was created without an Index entry being created,

- 9 cases in which incorrect Ref Nos had been created in the DMS,

- 6 instances in which the final digit of a longer than usual Ref No had been omitted (e.g. PAW-BIT-Nov2014-33-11-1148 was exported as PAW-BIT-Nov2014-33-11-114),

- 3 cases in which documents had been marked as removed in the Index but not removed from the DMS,

- 2 cases in which documents were missing from the DMS export.
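At its core, this cross-check compares two sets of Ref Nos, which is easy to automate once the Index entries can be exported as a list. The sketch below is a hypothetical illustration, not the procedure actually used. It assumes each folder is named exactly by its Ref No and that the Index Ref Nos are available as strings. It reports Index entries with no folder, folders with no Index entry, and folders whose name looks like an Index Ref No with its final character dropped (the truncation seen in the PAW-BIT-Nov2014-33-11-1148 example). Problems such as wrong but valid-looking Ref Nos, or entries that should have been removed, would still need human judgement.

```python
from typing import Iterable

def cross_check(index_refs: Iterable[str], folder_refs: Iterable[str]) -> dict:
    """Compare Index Ref Nos with folder names and classify the mismatches."""
    index_set, folder_set = set(index_refs), set(folder_refs)
    only_in_index = index_set - folder_set
    only_in_folders = folder_set - index_set
    # A folder whose name equals an Index Ref No minus its last character
    # is flagged as a probable truncation rather than as two separate errors.
    by_prefix = {ref[:-1]: ref for ref in only_in_index}
    truncated = {f: by_prefix[f] for f in only_in_folders if f in by_prefix}
    return {
        "missing_folder": sorted(only_in_index - set(truncated.values())),
        "no_index_entry": sorted(only_in_folders - set(truncated)),
        "probably_truncated": truncated,
    }

# REF-A, REF-B and REF-C are hypothetical Ref Nos.
report = cross_check(
    ["PAW-BIT-Nov2014-33-11-1148", "REF-A", "REF-B"],
    ["PAW-BIT-Nov2014-33-11-114", "REF-A", "REF-C"],
)
print(report)
```

In practice the folder names might be gathered with something like `[p.name for p in Path(root).iterdir() if p.is_dir()]`, where `root` is the top-level collection folder.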

It also revealed a number of problems and errors within the 17,000 index entries. These included 12 instances in which incorrect Filemaker Doc Refs had been created, and 6 cases in which duplicated Filemaker entries were identified.

The overall conclusion from this review of the integrity of the systems managing the document collection over some 37 years is that a substantial amount of human error has crept in, unobtrusively, over the years. Experience tells me that this is not specific to this particular system, but a general characteristic of all systems which are manipulated in some way by humans. From a digital preservation standpoint this is a specific risk in its own right since, as time goes by, as memories fade, and as people come and go, the knowledge about how and why these errors were made simply disappears, making it harder to identify and rectify them.

Started and Exported

A week ago the Pawdoc DP project started in earnest after 14 months of Scoping work. The Project Plan DESCRIPTION document and associated Project Plan CHART define a 5 month period of work in 10 separate sections. The Scoping work proved to be extremely valuable in ensuring as far as possible that the tasks in the plan are doable and of a fixed size. No doubt there will be hiccups but they should be self contained within a specific area and not affect the viability of the whole project.

It took rather longer than anticipated to get the m-Hance utility to a position where it could be used to export the PAWDOC files – though I guess such delays are typical in these kinds of transactions. First there was an issue over payment, caused by the m-Hance accounting system not being able to cope with a customer that was not a company and so could not be credit checked. I paid up front and the utility was released to me once the payment had gone through the bank transfer system. After that came a period of testing and some adjustment using the export facility WITHOUT deletion in Fish. At that point I finalised the Plan and the Schedule and started work. However, although the utility was believed to be working as it should, there followed a frustrating week during which running the export WITH delete (needed so that I could check any remaining files) kept producing exception reports, and the m-Hance support staff produced modified versions of the utility. There’s an obvious reminder here that nothing can be assumed until you try it out and verify it. Anyway, all is well now and the export WITH delete completed successfully late last night. I decided against re-planning to accommodate the delays, in the belief that I can make up the time during the three weeks planned for checking the output from the export.

Principles, Assumptions, Constraints, Risks

The export utility to move the PAWDOC files out of the Fish document management system and into files residing in Windows Explorer folders has been completed by the Fish supplier, m-Hance. Broadly speaking, it will deliver files with a title which starts with the Reference Number, followed by three spaces, then the file description that I originally input to Fish (truncated after 64 characters), and ending with the date when the file was originally placed in Fish. I have already received the utility documentation, which provides full instructions on how to install and run it, and am confident I know what to do. So all that remains is for me to receive the utility (which I expect early next week) and to give it an initial test run on the PAWDOC collection in Fish.
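The title layout described above is regular enough to generate and parse programmatically, which could help when checking the exported files. The sketch below is hypothetical: the post does not say how the date is written or what separates it from the description, so a single space and a YYYY-MM-DD date are assumptions, as is the assumption that a Ref No contains no spaces. The file extension is treated as separate from the title.

```python
import re
from datetime import date

SEPARATOR = "   "      # three spaces between Ref No and description
MAX_DESCRIPTION = 64   # descriptions are truncated after 64 characters

# ASSUMPTION: one space before the date, and the date written as YYYY-MM-DD.
TITLE_PATTERN = re.compile(
    r"^(?P<ref>\S+) {3}(?P<description>.*) (?P<filed>\d{4}-\d{2}-\d{2})$"
)

def make_title(ref_no: str, description: str, filed: date) -> str:
    """Build a title in the assumed export layout."""
    return f"{ref_no}{SEPARATOR}{description[:MAX_DESCRIPTION]} {filed.isoformat()}"

def parse_title(title: str) -> dict:
    """Split a title back into its parts, or raise if the layout is unexpected."""
    match = TITLE_PATTERN.match(title)
    if match is None:
        raise ValueError(f"unexpected title layout: {title!r}")
    return match.groupdict()

title = make_title(
    "PAW-BIT-Nov2014-33-11-1148",
    "A description that runs well beyond sixty-four characters and so gets cut short",
    date(2014, 11, 3),
)
print(parse_title(title))
```

Parsing every exported title this way would quickly show any file whose name does not follow the expected pattern.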

I’ve already created a full draft of the Project Plan Description document and the Project Plan Chart, so the test run will inform me of any final changes that I need to make to the plan. After that, all that will be left to do is to fix an overall start date and then to insert the start and end dates for each task.

One part of the Project Plan Description that was of particular interest to construct was the section on Principles, Assumptions, Constraints and Risks. Since some of them really require expert digital preservation knowledge and experience – commodities which I don’t have – I’ve sent these out to my colleagues Matt Fox-Wilson, Jan Hutar, and Ross Spencer in the hope that they will let me know of any serious errors of judgement that I may have made. The text of the section I sent them is shown below:

Principles

The Principles below have been followed in the construction of this Project Plan, and will be applied throughout the performance of the project:

- No action will be taken which will increase the cost or effort required to maintain the collection

- Backup, disaster recovery and process continuity arrangements are considered to be significant factors in ensuring the longevity of a collection and will therefore be included as an integral part of this preservation project plan.

- All Preservation actions on individual document files will be undertaken after the files have been transferred out of Fish into stand-alone files in Windows folders, so that a substantial number of transferred documents will be subjected to detailed scrutiny, thereby improving the chances of identifying any generic errors that may have occurred in the transfer process.

Assumptions

The Assumptions below have been followed in the course of constructing this Project Plan.

It is assumed that:

- The analysis of the files remaining in Fish after the ‘Export and Delete’ utility has been run, will take no longer than three weeks elapsed time.

- There is no publicly available mechanism to convert Microsoft Project (.mpp) files earlier than version 4.0.

- There is no publicly available mechanism to convert Lotus ScreenCam (.scm) files produced earlier than mid 1998.

- Application and configuration files that were included in the collection do not need to be able to run in the future as they do not contain content information. The mere presence of the files in the collection is sufficient.

- The zipping of a website is currently the easiest and most effective way of storing it and providing subsequent easy access.

- Versions of Microsoft Excel from 1997 onwards are not in immediate danger of being unreadable and therefore require no preservation work. Earlier versions are best converted to the latest version of Excel that is currently possessed – Excel 2007.

- Versions of Microsoft Word for Windows from 6.0/1995 onwards are not in immediate danger of being unreadable and therefore require no preservation work. Earlier versions, including those for Macintosh, are best converted to the latest version of Word that is currently possessed – Word 2007.

- Versions of Microsoft PowerPoint from 1997 onwards are not in immediate danger of being unreadable and therefore require no preservation work. Earlier versions, including those for Macintosh, are best converted to the latest version of PowerPoint that is currently possessed – PowerPoint 2007.

- None of the versions of HTML, including those pre-dating HTML 2.0, are in immediate danger of being unreadable; and therefore no preservation work is required on any of the Collection’s HTML files.

Constraints

This project may be limited by the following constraints:

- Some of the disks and zipped files in the collection contain huge numbers of files of various types and organised in complex arrangements. To address the preservation requirements of these particular items could delay the project indefinitely. Therefore no attempt will be made to undertake preservation work on these items; but, instead, a note will be included in section 3 of the Preservation Maintenance Plan (Possible future preservation issues).

- Disks that can’t be opened must remain in the Collection in physical form only.

- No automated tools are available for undertaking conversions of large numbers of files; and the use of macros has been discounted as being too error-prone and risky. Therefore, all the Preservation work defined in this Project Plan has to be undertaken manually by a single individual.

Risks

There is a risk that:

- The Zamzar service may be unable to convert some of the files submitted to it, despite tests having been completed successfully.

Mitigation: record the need to take further actions on specified files in the future in section 3 of the Preservation Maintenance Plan.

- The analysis of the files remaining undeleted after the Fish file export has taken place may throw up unexpected issues and may take much longer than anticipated.

Mitigation: after two and a half weeks’ work on this activity, the issues will be recorded in a document, and the need to address them in the future will be recorded in section 3 of the Preservation Maintenance Plan.

Final Planning underway

Since about last April, I’ve been planning various aspects of the project to preserve my PAWDOC document collection. This has included:

- Deciding what to do with zip files

- Analysing problem files identified by the DROID tool

- Figuring out how to deal with files that won’t open

- Investigating all the physical disks associated with the collection including backup disks

All of this work has now been completed, and a clear plan identified for each individual item that requires some preservation work.

In parallel, I have been exploring the possibility of moving the collection’s documents out of the Document Management System it currently resides in (Fish), to standard Windows application files residing in Windows Explorer folders. This has included detailed planning of the structure of the target files, and of the process that would have to be undertaken to achieve the transformation. The Fish supplier has recently told me that a utility to undertake this move is now available, and I have confirmed that I want to go ahead with this approach. We are now entering a phase of detailed testing and further planning to verify that this is a viable and sensible way forward. Should no significant obstacles be identified, I anticipate being ready to undertake the move out of the Fish system sometime in January 2018.

Since the bulk of the planning work has now been completed, it has been possible to assemble a draft Preservation Project Plan CHART which itemises each piece of work that will be required. Using this as a base, and incorporating the outcome of the work on the utility with the Fish supplier, I shall start to assemble the overall Preservation Project Plan Description document, and to allocate timescales and effort to each task on the plan.

Dealing with Disks

One very specific aspect of digital Preservation is ensuring that the contents of physical disks can be accessed in the future. I found I had four types of challenges in this area: 1) old 5.25 and 3.5 disks that I no longer have the equipment to read; 2) a CD with a protected video on it that couldn’t be copied; 3) two CDs with protected data on them that couldn’t be copied; and 4) about 120 CDs and DVDs containing backups taken over a 20 year period. My experiences with each of these challenges are described below:

1) Old 5.25 and 3.5 disks: I looked around the net for services that read old disks and eventually decided to go with LuxSoft, after making a quick phone call to reassure myself that this was a bona fide operation and that the price would be acceptable. I duly followed the instructions on the website to number and wrap each disk, before dispatching a package of 17 disks in all (14 x 5.25, 2 x 3.5, 1 x CD). Within a week I’d received, by email, a zip file of the contents of those disks that could be read, together with an invoice for what I consider to be a very reasonable £51.50. The two 3.5 disks and the CD presented no problems, and I was provided with their contents. The 5.25 disks included eight which had been produced on Apple II computers in the mid 1980s, and LuxSoft had been unable to read these. I was advised that there are services around that can deal with such disks but that they are very expensive, and that perhaps my best bet would be to ask the people at Bletchley Park (of Enigma fame), who apparently maintain a lot of old machines and might be willing to help. However, since these disks were not part of my PAWDOC collection and I didn’t believe there was anything particularly special on them, I decided to do nothing further with them and consigned them to the loft with a note attached saying they could be used for displays etc. or destroyed. Of the six 5.25 disks that were read, most of the material was either in formats which could be read by Notepad or Excel, or in a format that LuxSoft had been able to convert to MS Word, and this was sufficient for me to establish that there was nothing of great import on them. However, one of the 5.25 disks (dating from 1990) contained a ReadMe file explaining that the other three files were self-extracting zip files: one to run a communication package called TEAMterm, one to run a TEAMterm tutorial, and one to produce the TEAMterm manual.
Since this particular disk was part of the PAWDOC collection (none of the other 5.25 disks were), I asked LuxSoft to do further work to actually run the self-extracting zips and to provide me with whatever contents and screenshots could be obtained. I was duly provided with about 30 files, which included the manual in Word format and several screenshots giving an idea of what the programme was like when it was running. LuxSoft charged a further £25 for this additional piece of work, and I was very pleased with both the help I’d been given and the amount I’d been charged.

2) CD with protected video files: This CD contained files in VOB format that had been produced for me from the original VHS tape back in 2010. The inbuilt protection prevented me from copying them onto my laptop and converting them to an MP4 file, so I went back to the original tape instead. After searching the net, I found a company called Digital Converters, based in the outbuildings of Newby Hall in North Yorkshire, which charged a flat rate of £10.99 + postage to convert a VHS tape and to provide the resulting MP4 file in the cloud ready to be downloaded. It worked like a dream: I created the order online, paid the money, sent the tape off, and a few days later I downloaded my MP4 file.

3) CDs with protected data: I’d been advised that one way to preserve the contents of disks is to create an image of them – a sector-by-sector copy of the source medium stored as a single file in the ISO image format. This seemed to be the best way to preserve these two application installation disks, which had resisted all my attempts to copy and zip their contents. After reading reviews on the net, I decided to use the AnyBurn software, which is free and portable (i.e. it doesn’t need to be installed on your machine; you just double-click it when you want to use it). This proved extremely easy to use, and it produced image files of the two CDs in question in the space of a few minutes.

4) Backup CDs and DVDs: The files on these disks were all accessible, so I had a choice of either creating zip files or creating ISO image files. I chose to create zips for two reasons: first, I wanted to minimise the size of the resulting files, and an ISO image is uncompressed; and second, on some of the disks I only needed to preserve part of the contents, and I wasn’t sure whether that could be done when creating a disk image.
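Item 1 above mentions self-extracting zip files. Where the archive inside such an executable is in ZIP format, its contents can often be listed (and extracted) without running the program at all, because Python's standard zipfile module locates a ZIP archive from the end of the file and so tolerates an executable stub prepended to it. The sketch below assumes exactly that; self-extractors built with other archivers (ARC or LHA, for example) will not be recognised, and the function name is hypothetical.

```python
import zipfile


def list_sfx_contents(path):
    """List the members of a self-extracting archive without running it.

    Assumes the archive embedded in the executable is in ZIP format.
    zipfile finds the ZIP central directory by searching from the end of
    the file, so an executable stub in front of the archive is tolerated.
    Returns (name, uncompressed size) pairs, or None if no ZIP is found.
    """
    if not zipfile.is_zipfile(path):
        return None  # e.g. an ARC- or LHA-based self-extractor: needs another tool
    with zipfile.ZipFile(path) as zf:
        return [(info.filename, info.file_size) for info in zf.infolist()]
```

If the listing succeeds, `zipfile.ZipFile(path).extractall(folder)` would unpack the same members. Whether this would have worked on the TEAMterm disk is untested; it would only cover stage 2 (reading the files), not running the program.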
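The sector-by-sector imaging described in item 3 above can be sketched in a few lines of Python. This is a minimal illustration, not a substitute for a tool like AnyBurn: it assumes the drive can be opened as a raw device (on Linux typically /dev/sr0), assumes 2048-byte data sectors, and does no retrying or error handling, so a disc whose protection relies on unreadable sectors will probably make it fail. It also records a SHA-256 checksum so that the image can be re-verified later; the function name is hypothetical.

```python
import hashlib

SECTOR_SIZE = 2048  # user-data bytes per sector on a standard data CD or DVD


def image_disc(source, image_path):
    """Copy `source` sector by sector into a single image file.

    `source` is assumed to be a raw optical drive device (for example
    /dev/sr0 on Linux) or any readable file. Returns the number of
    sectors copied and the SHA-256 checksum of the image.
    """
    digest = hashlib.sha256()
    sectors = 0
    with open(source, "rb") as src, open(image_path, "wb") as dst:
        while True:
            sector = src.read(SECTOR_SIZE)
            if not sector:  # end of the medium
                break
            dst.write(sector)
            digest.update(sector)
            sectors += 1
    return sectors, digest.hexdigest()
```

Storing the returned checksum alongside the image means a later check only needs to recompute it and compare.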
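The two reasons given in item 4 for preferring zips (compression, and keeping only part of a disc) can be illustrated with Python's zipfile module. The sketch assumes the disc is mounted as an ordinary folder and that the parts worth keeping are whole top-level subfolders; the function name and any folder names are hypothetical.

```python
import os
import zipfile


def zip_selected(disc_root, subfolders, zip_path):
    """Zip only the chosen subfolders of a disc, using Deflate compression.

    Paths inside the zip are stored relative to `disc_root`, so the
    original folder layout is kept. Returns the number of files added.
    """
    count = 0
    with zipfile.ZipFile(zip_path, "w", compression=zipfile.ZIP_DEFLATED) as zf:
        for sub in subfolders:
            for dirpath, _dirnames, filenames in os.walk(os.path.join(disc_root, sub)):
                for name in sorted(filenames):
                    full = os.path.join(dirpath, name)
                    zf.write(full, arcname=os.path.relpath(full, disc_root))
                    count += 1
    return count
```

For example, passing a mounted backup disc and `["Documents"]` would keep just that folder and skip everything else on the disc.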

Having been through each of these four exercises, I can draw some general conclusions:

- The way to preserve disks is to copy their contents onto other types of computer storage.

- The storage capacities of old disk formats are much smaller than the capacities of contemporary computer storage. For example, none of the 5.25 disks contained files totalling more than 2 MB; the CDs contain up to about 700 MB; and even the DVDs contain no more than 4.7 GB. In an era where 1 TB hard disks are commonplace, these file sizes aren’t a problem.

- There are three stages in preserving disk contents: first, just getting the contents off the disk and onto other storage technology; second, being able to read the files; and third, should the contents include executables, being able to actually run the programs.

- The decision about whether you want to achieve stage 2 or stage 3 will depend on whether you think the contents, and the uses they will be put to, merit the extra effort and cost involved. In the case of the 5.25 disk containing the TEAMterm software described above, providing a capability to run the application would have involved finding an emulator to run on my current platform and getting the programme to work on it. I judged that this was not worth the effort, given the purpose for which the disk’s contents were being preserved (to be a record of the artefacts received by an individual working through that stage of the development of computer technology).
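Whether stage 3 even arises depends on whether the recovered files include executables. One rough way to find out is to check each file's leading bytes against known signatures, as in the sketch below. It recognises only two formats (the "MZ" header of DOS and Windows .EXE files, and the ELF header used on Linux), so DOS .COM files, Apple II programs and many others will be missed, and a data file that happens to begin with "MZ" would be wrongly flagged. Treat it as an illustration, not a classifier; the function name is hypothetical.

```python
import os

# Leading bytes ("magic numbers") of a small, illustrative set of executable formats.
EXECUTABLE_SIGNATURES = {
    b"MZ": "DOS/Windows executable",
    b"\x7fELF": "ELF (Linux/Unix) executable",
}


def find_executables(root):
    """Return (relative path, format) for files starting with a known signature."""
    found = []
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in sorted(filenames):
            path = os.path.join(dirpath, name)
            with open(path, "rb") as f:
                head = f.read(4)  # enough bytes for the longest signature above
            for magic, label in EXECUTABLE_SIGNATURES.items():
                if head.startswith(magic):
                    found.append((os.path.relpath(path, root), label))
                    break
    return found
```

An empty result suggests the preservation effort can stop at stage 2; anything flagged is a candidate for the stage 3 decision described above.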